Microsoft Copilot bug exposed confidential emails to AI processing

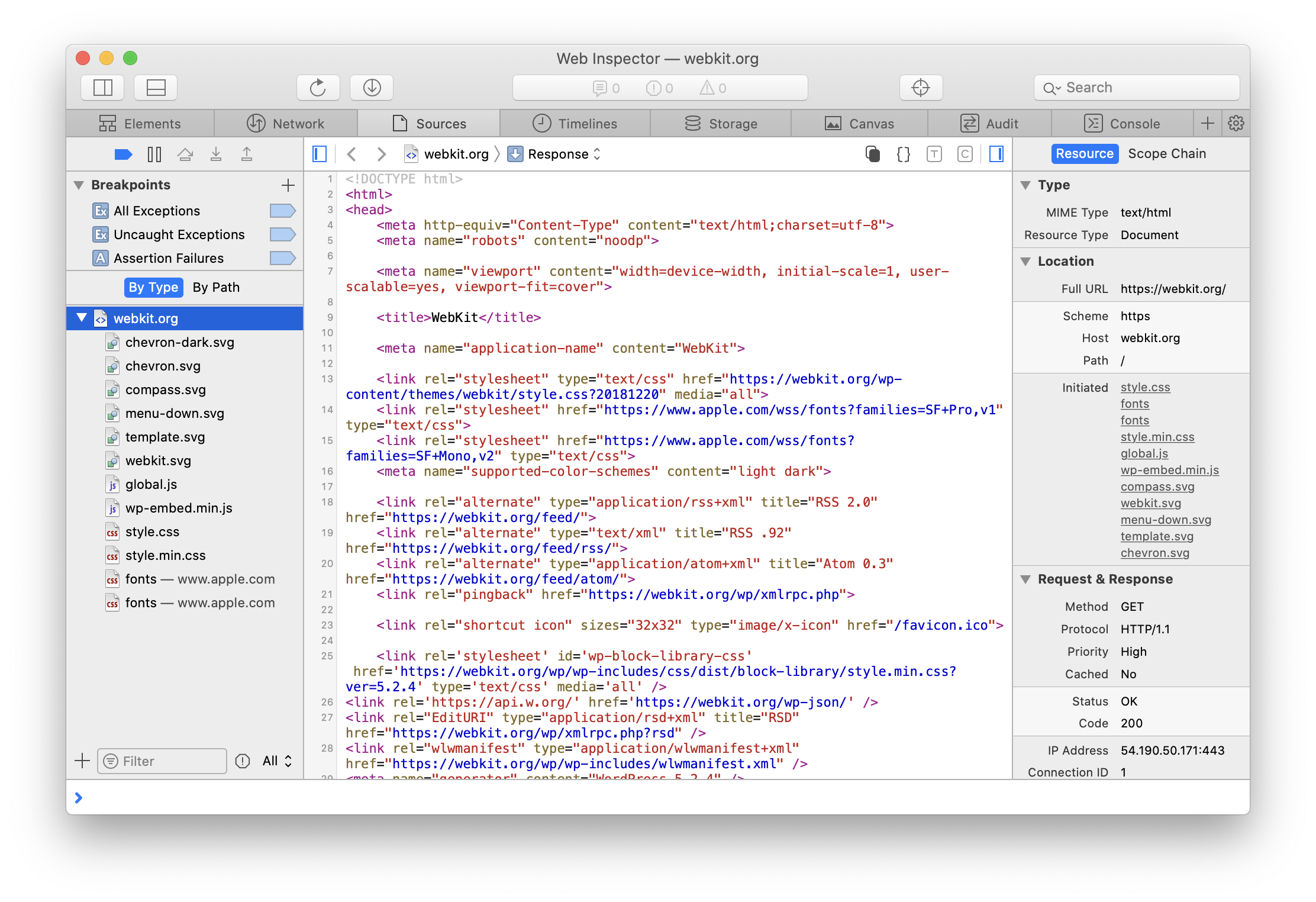

Microsoft has acknowledged a significant flaw in its Microsoft 365 Copilot AI assistant that allowed the system to process and summarise emails labelled as confidential, bypassing established data protection controls. The issue, tracked internally as CW1226324, emerged from a coding defect in the Copilot “Work” tab that inadvertently pulled content from users’ Sent Items and Drafts folders — even when those messages carried sensitivity labels and were protected by data loss prevention policies designed to prevent such access.

The glitch was first identified internally around 21 January and persisted into February. Microsoft began rolling out a fix early that month, monitoring the deployment and contacting customers as the patch reached them. Though the company maintains that no unauthorised party gained access to protected data, the behaviour nonetheless breached the intended governance mechanisms within Microsoft 365 Copilot.

Microsoft 365 Copilot is an AI tool embedded across the productivity suite, including Outlook, Word, Excel, PowerPoint and OneNote, designed to help users draft content, find information and summarise emails and documents. Copilot relies on sensitivity labels and DLP configurations to ensure that confidential content remains shielded from automated processing. However, the fault in the code base meant that those protective signals were overlooked for certain email folders, allowing the AI to ingest material marked as confidential despite organisational safeguards.

According to the service advisory, the defect did not extend to mail outside the specified folders, such as the inbox, and Microsoft has emphasised that only users already authorised to view the emails could see their content through Copilot’s summarisation. Nevertheless, security professionals raised concerns that the incident illustrated limits in how AI-driven systems enforce policy controls, particularly when access pathways and retrieval logic intersect.

Cybersecurity analysts pointed out that because the sensitivity labels and DLP policies are ordinarily tasked with preventing automated access by AI tools such as Copilot, the failure to honour those restrictions raised broader questions about governance and compliance. One expert highlighted that without independent verification layers outside the primary AI platform, organisations struggle to detect and audit when protected data is being processed in violation of their own rules.

Microsoft’s statement indicated that the company believes the root cause has been addressed for most customers, with the configuration update reaching the majority of affected environments. However, the full timeline for remediation remains under evaluation as Microsoft continues telemetry monitoring and outreach to enterprise customers to confirm the effectiveness of the fix. The vendor has not disclosed specific numbers regarding the scale of affected users or organisations.

Business and IT leaders expressed mixed reactions to the development. Some noted that bugs happen in complex software systems and that the advisory status of the issue suggested relatively limited impact. Others argued that the incident could erode confidence in AI-integrated tools among privacy-conscious organisations, particularly those in regulated sectors such as healthcare and finance where misclassification or exposure of protected information could trigger compliance ramifications.

Industry watchers also flagged the timing of the bug, which comes amid intensifying scrutiny of AI’s role in workplace systems. Regulatory bodies in Europe and beyond have been debating stricter governance frameworks for AI, with an emphasis on data protection, accountability and transparency. The Microsoft Copilot episode, in their view, underscored the real-world challenges of integrating generative AI into daily workflows while maintaining robust privacy controls.

Security practitioners stressed that sensitivity labels and DLP policies, while crucial, are one part of a layered defence strategy and should be complemented with independent monitoring and verification to guard against unexpected behaviour by complex AI systems. They added that organisations must stay vigilant as embedded AI expands across software ecosystems, ensuring that protective mechanisms are both correctly configured and regularly tested.

The article Microsoft Copilot bug exposed confidential emails to AI processing appeared first on Arabian Post.
